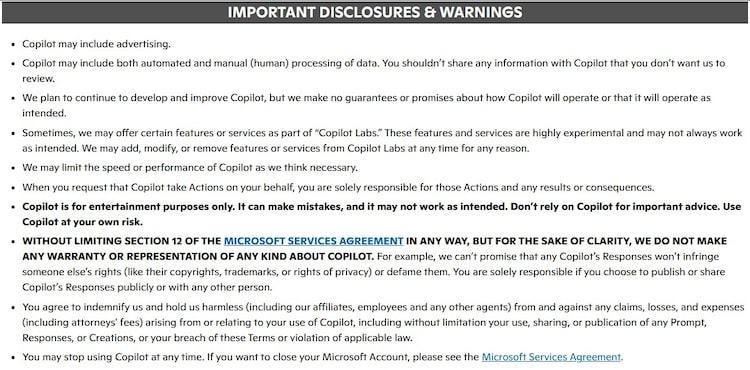

Microsoft has changed the terms for its Copilot AI. The new language is flat and a little timid. It says Copilot is meant for entertainment purposes only, and it tells users that they use the tool at their own risk. The meaning is not hard to read: the company now claims less about what the tool can be relied on to do.

Copilot is not a trivial product. It is embedded in Microsoft's working software, including Excel and PowerPoint, where people expect results they can rely on. It has been marketed, particularly to enterprise users, as a way to save time and effort, one that can assist and sometimes even substitute for human labor. Calling such a tool entertainment creates a tension that is hard to ignore.

A Subtle Shift in Liability and Expectations

The reason is probably simple. Tools such as Copilot are fallible, and they fail in a manner that can be persuasive. The output can read well even when it is wrong. By rewording its terms, Microsoft shifts the burden of those errors. The onus now falls more clearly on the user: anyone who assumes the tool is reliable will be held responsible for that assumption.

There is also the issue of timing. According to Microsoft, it revised the terms in October last year, yet the change has only now started to draw attention. The delay shows how easily such changes can slip through without much notice, even when they alter the meaning of the tool itself.

Copilot is still there, but its role has been reduced. It no longer stands, at least in words, as a dependable assistant. Its status is less definite. The judgment is left to the user, who must decide how far to trust what it generates.

Large language models, including OpenAI's GPT and Anthropic's Claude, sometimes make false statements. They do not clearly distinguish between what is known and what is invented. This tendency has diminished over time, yet it has not vanished. Microsoft appears to know this. Its updated terms imply that a warning about the reliability of such systems is still needed.

It is not hard to guess the intent of these terms. They put a limit on responsibility. The company does not want to bear the cost if the system provides false or misleading information. The risk is in effect transferred to the person who uses the tool rather than the company that makes it.

Still Usable for Productivity, But With Caveats

However, Microsoft does not prohibit the use of Copilot at work, nor does it ask users to set it aside. Rather, it delineates the tool's role. Copilot can offer suggestions, but it should not have the final say. The user has to verify what it generates, particularly when the matter is important. This is, in fact, not unusual. Most such systems come with similar warnings. The tool provides assistance, and the final decision is left to the person who uses it.

Expansion Continues Despite the Softer Language

Microsoft has not withdrawn its support for Copilot as a work tool. It still advertises it in that role. According to a Bloomberg report, one of the company's senior executives, Judson Althoff, spoke about recent sales with some satisfaction. In an internal meeting, he said that Microsoft had reached what he called “some pretty big audacious goals” in the last quarter. The tone is one of confidence, perhaps of certainty.

In January, Microsoft said that only a small percentage of its customers had decided to pay for Copilot. As of the end of December 2025, the figure was three per cent. At the same time, the company has moved to extend the system. Earlier this year it introduced a new tool known as Copilot Cowork, which is based on Anthropic's technology, namely Claude Cowork. Claude Cowork had already caused some unease among large software firms such as TCS and Infosys, because it pointed to a change in how some types of work could be performed.

Microsoft also shapes the language around these tools. It has used terms such as “vibe working” to describe the use of AI in daily activities. The term is vague, yet its drift is clear. Whether this language makes the reality of the work clearer or more obscure is another question.

Final words

Microsoft's recalibration of Copilot is more a re-evaluation than a retreat. Describing the tool as entertainment reduces the promise, while the company quietly keeps the product inside serious workflows. It is an odd balancing act: selling efficiency while cautioning against blind faith, and promising productivity while warning that users must verify everything.

In practice, little changes. People will continue to use Copilot to write, summarize, and brainstorm. What is different is the small print, which now reads like a polite shrug. The burden, stated indirectly but placed squarely, lies with the user. In the meantime, Microsoft is still growing the ecosystem and pursuing adoption, which suggests that its confidence has not diminished, only its wording.